All opinion articles are the opinion of the author and not necessarily of American Military News. If you are interested in submitting an op-ed please email [email protected]

Driverless cars will be on the road within a decade, affecting nearly every aspect of American life. While the economic consequences require much consideration, just as important are the ethical questions that come with this new technology. What follows is a discussion of how the free market can answer those questions as decisions of life and death are made by brains of silicon.

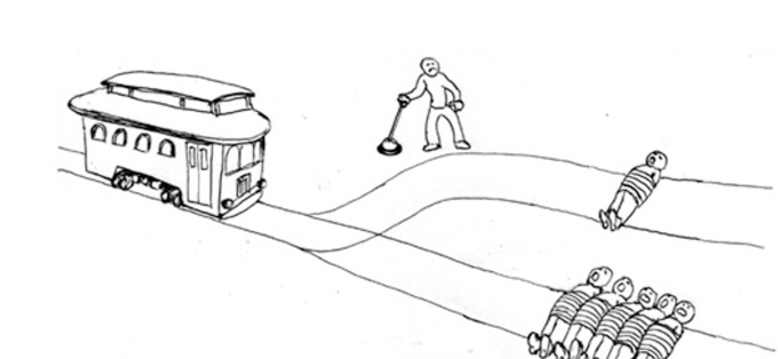

The classic philosophical illustration of the situation is the “Trolley Problem,” depicted above. Five people are bound to a track, with a trolley coming. Up the track, a man has the ability to pull a lever, diverting the trolley to a secondary track, which would result in the death of only one person. If the man does nothing, letting the “fates” decide, he passively watches as five people die. However, if he pulls the lever, taking an active role, he saves the five while sacrificing the other person.

Much has been written about this problem, and I won’t detail its myriad moral and situational permutations. What is certain, however, is that in the very near future machines will be in positions where they need to make these decisions. Moralists and ethicists profoundly disagree about what the “right” decision is in each situation, and there are principled ethical arguments for each course.

The free market offers a mechanism to resolve these differences. The owner of a driverless car or fleet should be able to choose the “ethics” of the vehicle’s software. One can easily imagine a dial which increases the level of “self-preservation” in the car’s operating system, similar to adjusting the touch sensitivity on a smartphone.

Further, one can easily imagine competing operating systems which offer more flexible or more rigid “ethical constraints,” with more complex or feature-filled operating systems costing more. In conjunction, the insurance market will undoubtedly evaluate each type of software and feature set differently. A more “self-interested” AI will cost more to insure, as it will be inherently less flexible than those with the potential to sacrifice the life of their passengers. However the market develops, consumers must be given the choice in how to weigh the variables.

This future is swiftly approaching, and it is critical that the voice of consumers be recognized and that they have the opportunity to choose options which fit their tastes. Should we not have a say in how our driverless chauffeurs value our lives, rather than ceding this decision to the technical elites?

John Mantia is a Senior Analyst at a Hedge Fund in New York. Born and raised in St. Louis, he is a graduate of Fordham University’s Gabelli School of Business.
